I first wrote an article on Facebook ads A/B testing back in March 2017. In 2 years, Facebook’s advertising tools have changed, competition has skyrocketed, and advertisers have grown more skilled in making people stop (scrolling) and click (the Shop Now button).

I still agree with most of what I wrote back in 2017. However, whether you’re just starting out with Facebook ads or relying on what you learned a couple of years ago, reviewing the latest best practices will help you get up to speed with high-ROI testing.

And if you’re not yet running split tests on your online ad campaigns…

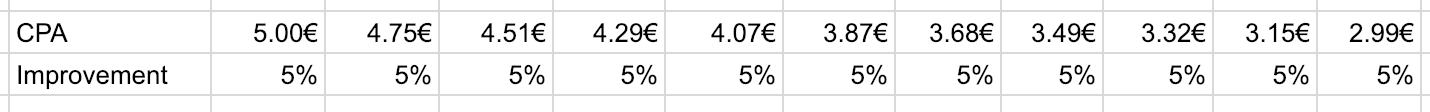

Well… Consider improving your cost-per-result by 5% with each of 10 consecutive tests. If your CPA is around 5€, it would drop to about 3€ after 10 compounding 5% improvements. That’s a 40% reduction from the initial CPA!
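If you’d like to check that math yourself, here’s a minimal Python sketch. It assumes each test cuts the current CPA by the same percentage, so the improvements compound; the function name `compounded_cpa` is made up for this illustration.

```python
def compounded_cpa(initial_cpa: float, improvement: float, tests: int) -> float:
    """CPA after `tests` consecutive tests, each cutting the current CPA by `improvement`."""
    return initial_cpa * (1 - improvement) ** tests


final_cpa = compounded_cpa(5.0, 0.05, 10)
print(f"CPA after 10 tests: {final_cpa:.2f}€")
print(f"Total reduction: {1 - final_cpa / 5.0:.0%}")
```

Swap in your own starting CPA and expected improvement per test to see how quickly small wins add up.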

Here’s a quick overview of best practices that will help you get high ROI out of your Facebook A/B tests. You’ll find a detailed explanation on each point below.

- Test high-impact ad campaign elements

- Test one campaign element at a time

- Prioritise your A/B test ideas

- Test a reasonable number of variations

- Make sure your split tests are statistically valid

- Calculate the right budget for each A/B test

- Focus on the right metrics & conversion events

- Run as many (good) split tests as you can

But before we jump to all the best practices, here’s what is wrong with many of the “We did an A/B test that improved our results by 1000%” clickbait articles in many (sadly, including the top) marketing blogs.

Key mistakes in Facebook ad A/B testing

Back in 2017, I read an article about a Facebook ad A/B test of 26 design variations.

The article drew several conclusions, showing which ads outperformed others. However, there was no word about the experiment’s setup and budget.

Which left me wondering… Were the test results even statistically valid?

Most likely, they weren’t.

However, this wasn’t just one bad example. Many marketers make the same mistake of running Facebook A/B tests without realizing their results are skewed or statistically insignificant.

Here are some of the key reasons Facebook split tests fail to bring meaningful results:

- Trying to test everything at once – it will be impossible to later tell which tested element resulted in success/failure

- Concluding tests too fast + using too low test budgets – you need a proper amount of conversions to conclude that one variation outperforms the other

- Wrong test setup – you need to give each variation equal opportunity to deliver results

- Testing low-variance elements – changing one line or word in your ad creative will not result in meaningful split test results

OK, so how do you run Facebook ad A/B tests that actually help to improve your CPAs and reduce cost?

⬇⬇⬇

Rule #1: Test high-impact ad campaign elements

Not all your split testing ideas are gold. 💡💡💡≠💰

And with limited marketing budgets, you’ll need to find the test elements that have the highest ROI.

When searching for Facebook ad A/B testing ideas, think about which ad element could have the highest effect on your click-through and conversion rates.

AdEspresso studied data from over $3 million worth of Facebook Ads experiments and listed the campaign elements with the highest split testing ROI:

- Countries

- Precise interests

- Mobile OS

- Age ranges

- Genders

- Ad images

- Titles

- Relationship status

- Landing page

- Interested in

However, take this list with a huge grain of salt. If you already know your target audience’s locations and demographics, most of this list becomes irrelevant to your A/B testing strategy.

Instead, you might want to split test the following Facebook campaign elements:

- Ad visual

- Ad copy, especially the headline

- Ad delivery objectives

Consumer Acquisition found that images are arguably the most important part of your ads — they’re responsible for 75%-90% of ad performance.

Not surprising, considering that most of an ad placement on Instagram or Facebook mobile newsfeed is filled with…🥁🥁🥁… The image.

So improving your ad visuals is a good place to start. Here are 25 hacks for improving your Facebook ads’ design.

Here are some more things to test after you run out of testing ideas for image, copy, and ad delivery objective:

- Ad placements

- Call-to-action buttons

- Campaign type

Rule #2: Prioritise your A/B test ideas

A/B testing Facebook ads doesn’t only require money, it also requires time.

Even if you’re lucky enough to not be constrained by budget, consider how much time and effort your design/copywriting/PPC team will put into preparing a split test.

You need to carefully consider which split tests to work on first.

The best way to do it is on a prioritisation spreadsheet.

Create a prioritisation table for weighing all your A/B testing ideas. You can assign up to 10 factors with different weight to validate the ideas with highest potential.
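If a spreadsheet feels like overkill, the same weighting logic fits in a few lines of Python. This is only a sketch: the three factors (`impact`, `confidence`, `ease`), their weights, and the 1-10 ratings are hypothetical placeholders, not values from any particular framework.

```python
# Hypothetical factors and weights (they sum to 1); replace them with your own.
WEIGHTS = {"impact": 0.5, "confidence": 0.3, "ease": 0.2}


def priority_score(ratings: dict) -> float:
    """Weighted sum of 1-10 ratings for a single test idea."""
    return sum(WEIGHTS[factor] * ratings[factor] for factor in WEIGHTS)


# Made-up ratings for three test ideas.
ideas = {
    "New ad visual": {"impact": 9, "confidence": 7, "ease": 5},
    "New headline": {"impact": 6, "confidence": 6, "ease": 9},
    "New CTA button": {"impact": 3, "confidence": 5, "ease": 10},
}

# Highest score first: that's the idea to test first.
ranked = sorted(ideas, key=lambda name: priority_score(ideas[name]), reverse=True)
for name in ranked:
    print(f"{name}: {priority_score(ideas[name]):.1f}")
```

With these made-up ratings, the new ad visual comes out on top, mainly because impact carries the heaviest weight.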

If you haven’t created a prioritisation framework before, check out the ones by Optimizely and ConversionXL.

Start by A/B testing the most promising ad elements – image source

Rule #3: Test one element at a time

As you get started with Facebook advertising, you’ll realize that there are soooo many things to test: ad image, ad copy, Facebook ad targeting, bidding methods, campaign objective, another ad image, another ad copy, another… You get the point.

The rookie mistake you’re likely to make at this point is to create an A/B test with more than one variable category.

Let’s say you want to test 3 ad images, 3 headlines, and 3 main copies. This makes 3x3x3 = 27 different Facebook ads. It would make much more sense to test one of these ad elements at a time, e.g. three different images.
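To see how fast the combinations pile up, here’s a quick Python sketch that lists every possible ad for the example above. The creative names are placeholders.

```python
from itertools import product

# Placeholder creative assets: 3 of each element.
images = ["image_1", "image_2", "image_3"]
headlines = ["headline_1", "headline_2", "headline_3"]
copies = ["copy_1", "copy_2", "copy_3"]

# Testing everything at once means one ad per combination.
all_combinations = list(product(images, headlines, copies))
print(f"All elements at once: {len(all_combinations)} ads")

# Testing a single element (the image) keeps the test small.
print(f"Images only: {len(images)} ads")
```

Each of those 27 ads would need its own share of budget and conversions, which is why the single-element test finishes so much sooner.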

📍 The fewer ad variables you have, the quicker you’ll get relevant test results.

Here’s a great illustration by ConversionXL, showing what will happen if you try to test too much stuff at once.

It gets super messy – Image source

Test one element per split test, and use your prioritisation table to define which one to examine first.

P.S. this doesn’t mean that you should only run 1 experiment at a time. You can have five A/B tests running if you have enough audiences and marketing budget to play around with.

Rule #4: Test a reasonable number of variations

Even when testing a single ad element, you may be tempted to create tens of variations with small alterations.

Here’s an example of an A/B test that overdid the number of tested ad design variations.

It will cost them $1k+ to get valid results – Image source

It doesn’t make sense to test that many ad variations at once as Facebook will either start to auto-optimize the ad delivery too soon or your target audience will see 20+ different ads by you.

That’s going to be one expensive (and most likely, annoying) experiment.

What is a good number of variations per campaign? Ideally, it’s 2 (old vs new version). But you can include up to 10 variations in your test IF you have the budget for each test cell to collect a minimum of 50 conversions.

In most cases, there is no need for more than 5 ad variations. And if your variations are too similar, the test won’t show a meaningful difference between their results.

Rule #5: Test 3-5 highly differentiated variations

If you haven’t yet found your perfect ad copy or ad design, you should aim to experiment with highly different ad variations.

It won’t make much difference to your target audience if you change a few words or move your product around in the image a bit.

However, as you test highly differentiated variations, you can get insight about the type of ad design or ad copy people prefer and expand on it later.

For example, at Scoro, we’ve tested many different ad designs to find the one that works best.

First, we A/B tested 3 highly different ad designs

Each of these ad designs has a completely different design angle.

Later, we could use the winning ad variation to develop similar designs for further testing.

Later, we split tested the winning design’s alterations

Here’s the formula:

A/B test 3-5 variations ➡ Find a winning variation ➡ A/B test winner’s alterations

Rule #6: Use the right Facebook campaign structure

Update: We have published a dedicated article on Facebook ad campaign structure. Check it out for improved results. 😉

When testing multiple Facebook ad designs or other in-ad elements, you’ve got two options for structuring your A/B testing campaigns:

- A single ad set

- Multiple single-variation ad sets

Soon after I published this article in 2017, Facebook released the Split Test feature in Ads Manager that helps you to quickly set up a multi-cell split test (option 2).

However, you can still set up A/B tests manually in Ads Manager if that’s what you’re more comfortable with.

Let’s check out both options.

1. A single ad set – all your ad variations are within a single ad set.

A/B test campaign structure 1

The good side of this option is that your target audience won’t see all your ad variations multiple times, as can happen when multiple ad sets target the same audience.

However, this A/B testing campaign structure has a huge negative side: Facebook will start to auto-optimize your ads and you won’t get relevant results.

Use the single ad set option when launching a set of completely new ad creatives to a new audience to quickly learn what works. And then run A/B tests to improve the initial campaign’s results.

2. Multiple single-variation ad sets – each ad variation is in a separate ad set.

A/B test campaign structure 2

As you place every ad variation in a separate ad set, Facebook will treat each ad set as a different entity and won’t auto-optimize based on too few results.

That is the best option for getting relevant experiment results. This is the setup you will get when using Facebook’s Split Test feature.

Rule #7: Make sure your test results are valid

Do you know when’s the best time to analyze your A/B test results and conclude the experiment?

Is it three days after the campaign activation? Five days? Two weeks?

Or what would you do if Variation A had the CTR of 0.317% and Variation B the CTR of 0.289%?

For example, how would you conclude the experiment below? 👇

Would you conclude this A/B test?

Truth be told, the test above should not be concluded yet as there isn’t enough data to really tell which variation performed best.

To make sure your A/B tests are valid, you’ll need to have a sufficient amount of results to draw conclusions.

The best way to guarantee the quality of your Facebook ad test results is to use a calculator, a very specific kind of calculator.

Rule #8: Calculate statistical significance

If you want your Facebook tests to give valuable insights, put them through an A/B significance test to determine if your results are valid.

Test your test’s validity – Image source

Instead of website visitors, enter the no. of impressions to a specific ad variation or ad set. Instead of web conversions, enter the no. of ad clicks or ad conversions.

Look for a confidence level of 90% or more before you consider one variation to win over the other.
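If you’re curious what such a calculator does under the hood, here’s a rough Python sketch. It assumes a two-sided, two-proportion z-test and reports confidence as 1 minus the p-value; the calculator you use may apply a different method, so treat the numbers as an approximation. The impression counts are made up, with click counts chosen to match the 0.317% vs 0.289% CTR example from Rule #7.

```python
from math import erf, sqrt


def normal_cdf(z: float) -> float:
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1 + erf(z / sqrt(2)))


def confidence_level(impr_a: int, clicks_a: int, impr_b: int, clicks_b: int) -> float:
    """Confidence (1 - two-sided p-value) that the two variations' rates differ.

    Assumes at least one click overall, otherwise the standard error is zero.
    """
    rate_a = clicks_a / impr_a
    rate_b = clicks_b / impr_b
    # Pooled rate under the assumption that both variations perform the same.
    pooled = (clicks_a + clicks_b) / (impr_a + impr_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / impr_a + 1 / impr_b))
    z = abs(rate_a - rate_b) / std_err
    p_value = 2 * (1 - normal_cdf(z))
    return 1 - p_value


# Hypothetical test: 100,000 impressions each, CTRs of 0.317% and 0.289%.
confidence = confidence_level(100_000, 317, 100_000, 289)
print(f"Confidence: {confidence:.1%}")
print("Conclude the test" if confidence >= 0.90 else "Keep the test running")
```

Even with 100,000 impressions per variation, this small CTR gap stays below the 90% threshold, so the test should keep running.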

Tip: Wait at least 72h after publishing before evaluating your split test results. Facebook’s algorithms need some time to optimize your campaign and start delivering your ads to people.

According to an article on ConversionXL, there’s no magical number of conversions you need before concluding your A/B test.

However, I’d suggest that you collect at least 100 clicks/conversions per variation before pausing the test. Even better if you’re able to collect 300 or 500 conversions per variation.

Rule #9: Know what budget you’ll need

The logic is simple: The more ad variations you’re testing, the more ad impressions and conversions you’ll need for statistically significant results.

So, what’s the best formula for calculating your Facebook ad budget?

What’s your perfect Facebook testing budget?

It’s quite simple:

Average Cost-per-conversion x No. of Variations x Needed Conversions

Start by looking at your other Facebook campaigns and defining your average cost-per-conversion.

Let’s say your goal is to get people clicking on your Facebook ad and the average cost-per-click for past campaigns has been $0.80.

Let’s continue the hypothesizing game, and say you’re looking to split test 5 different ad variations.

To get valid test results, you’ll need around 100-500 conversions per ad variation.

So, taking 300 conversions per variation as a middle ground, the formula to calculate your budget would be:

$0.80 x 5 x 300 = $1,200
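Here’s the same formula as a small Python helper, so you can plug in your own numbers. The function name `split_test_budget` is made up for this sketch; the inputs are the example values from above.

```python
def split_test_budget(
    cost_per_conversion: float, variations: int, conversions_per_variation: int
) -> float:
    """Average cost-per-conversion x no. of variations x needed conversions."""
    return cost_per_conversion * variations * conversions_per_variation


# Example from the article: $0.80 per click, 5 variations, 300 clicks each.
budget = split_test_budget(0.80, 5, 300)
print(f"Budget at 300 conversions per variation: ${budget:,.0f}")

# The low and high ends of the 100-500 conversions range.
for needed in (100, 500):
    print(f"Budget at {needed} conversions: ${split_test_budget(0.80, 5, needed):,.0f}")
```

Running the range shows the trade-off: the same 5-variation test costs somewhere between $400 and $2,000, depending on how much certainty you want.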

Now, before you bury all the hopes of getting statistically significant A/B test results, consider this:

You can cheat a little.

If one of your test variations is outperforming others by a mile, you can conclude the experiment sooner. (You should still wait for at least 50 conversions on each variation.)

Rule #10: Track the right metrics

As you look at your Facebook A/B test results, there will be lots of metrics to consider: ad impressions, cost-per-click, click-through rate, cost-per-conversion, conversion rate…

Which metrics should you measure in order to discover the winning ad variation?

It’s not the cost-per-mille (CPM) or click-through rate. These are the so-called vanity metrics that give you no real insight into your campaign’s performance.

Always track the cost-per-conversion as your primary goal.

Cost-per-conversion is your single most important ad metric as it tells you how much it costs you to turn a person into a lead or customer. And most of the time, increasing sales is the ultimate goal in your Facebook ad strategy.
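To illustrate why this matters, here’s a small Python sketch with two made-up ad variations. All the numbers are hypothetical; they’re picked to show that the variation with the best click-through rate isn’t necessarily the one with the lowest cost-per-conversion.

```python
# Hypothetical results for two variations with equal spend and impressions.
variations = {
    "A": {"spend": 200.0, "impressions": 40_000, "clicks": 400, "conversions": 20},
    "B": {"spend": 200.0, "impressions": 40_000, "clicks": 300, "conversions": 32},
}


def ctr(stats: dict) -> float:
    """Click-through rate: clicks divided by impressions."""
    return stats["clicks"] / stats["impressions"]


def cost_per_conversion(stats: dict) -> float:
    """Ad spend divided by the number of conversions."""
    return stats["spend"] / stats["conversions"]


best_by_ctr = max(variations, key=lambda name: ctr(variations[name]))
best_by_cpa = min(variations, key=lambda name: cost_per_conversion(variations[name]))

for name, stats in variations.items():
    print(f"{name}: CTR {ctr(stats):.2%}, cost-per-conversion ${cost_per_conversion(stats):.2f}")
print(f"Winner by CTR: {best_by_ctr}, winner by cost-per-conversion: {best_by_cpa}")
```

In this made-up case, Variation A wins on clicks, but Variation B turns the same spend into more customers, so B is the one to keep.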

Facebook ad split testing rulebook

To sum up, here’s the list of all the Facebook split testing rules discussed in this article. 👀

Rule #1: Test high-impact ad campaign elements

Rule #2: Prioritise your A/B test ideas

Rule #3: Test one campaign element at a time

Rule #4: Test a reasonable number of variations

Rule #5: Test highly differentiated variations

Rule #6: Use the right Facebook campaign structure

Rule #7: Make sure your A/B test results are valid

Rule #8: Calculate statistical significance

Rule #9: Know your test budget in advance

Rule #10: Track the right metrics

And, finally, keep some tests running all the time. Small improvements will incrementally lead to big improvements.